All the cloud vendors say they’ve been “working on AI for decades,” but Intel was shipping Pentiums that were bad at math in 1994.

When I said “Send a text message to Julian Chokkattu,” who’s a friend and fellow AI Pin reviewer over at Wired, I thought I’d be asked what I wanted to tell him. Instead, the device simply said OK and told me it sent the words “Hey Julian, just checking in. How's your day going?” to Chokkattu. I've never said anything like that to him in our years of friendship, but I guess technically the AI Pin did do what I asked.

Columbia University shut down all in-person classes on Monday, and faculty and staff were encouraged to work remotely. “We need a reset,” President Minouche Shafik said, in reference to what she called the “rancor” around pro-Palestinian rallies on campus, as well as the arrest—with her encouragement—of more than 100 student protesters last week. Also on Monday, Columbia’s office of the provost put out guidance saying that “virtual learning options” should be made available to students in all classes on the university’s main campus until the term ends next week. “Safety is our highest priority,” that statement reads.

By moving its coursework online, the administration has sent an important set of messages to the public. In the midst of what it says is an emergency, the school asserts that it is still delivering its core service to students. It affirms that universities share the public’s perception that education, per se—as opposed to research, entertainment, community-building, or any of the other elements of the college experience—is central to their mission. And it implies that Columbia is carrying out its duties of oversight and care for students.

But those messages don’t quite match up with reality. If the pandemic taught us anything, it’s that “moving classes online” isn’t really possible. A class isn’t just the fact of meeting at a given time, or a teacher imparting information during that meeting, or students’ receiving and processing such information. A university classroom offers a destination for students on campus, providing an excuse to traverse the quads, backpack on one’s shoulders, realizing a certain image of college life. Once there, the classroom does real work, too. It bounds the space and attention of learning, it creates camaraderie, and it presents opportunities for discourse, flirtation, boredom, and all the other trappings of collegiate fulfillment. Take away the classroom, and what’s left? Often, a limp rehearsal of the act of learning, carried out by awkward or unwilling actors. If the pandemic gave rise to hygiene theater, it also brought us this: pedagogy theater.

The pandemic emergency, at least, offered a reasonable excuse for compromise. A plague was on the loose, and avoiding death took precedence over optimizing teaching quality. But now, with COVID-19 restrictions lifted, the technologies that allowed for pedagogy theater remain. The ubiquity of Zoom and related software, along with the universal familiarity they built up during the pandemic, has made it easy for a provost or a teacher to just shut the doors for any given class—or on any given campus—on a whim, for any reason or no reason. If a professor should get sick or need to travel, or if there is a blizzard, meetings can be held on the internet. In 2023, Iowa State University moved classes online after a power-plant fire shut down its air-conditioning.


Columbia’s decision to go virtual because of campus unrest shows the breadth of emergencies that now justify this form of disruption. “Moving classes online” for everyone is a decision that universities can make whenever things go even slightly awry. A pandemic or a deranged gunman could be the cause, as could civil unrest, or just the threat of ice from an anticipated winter storm. Because this decision is portrayed as both temporary and exigent—because Zoom is treated as a fire extinguisher on the wall of every classroom, just in case it’s ever needed—schools are able to maintain their stated faith in the value of matriculating in person. In my experience as a professor who teaches at an elite private university, virtual learning is discouraged under normal circumstances. But as Columbia’s case shows, it might also be used whenever necessary. It’s the best of both worlds for colleges, at least if the goal is to control the stories they tell about themselves.

Online classes are supposed to occupy a middle ground. They are almost always worse than meeting in person, and they may be somewhat better than nothing at all. But that in-between space has turned out to be an uncanny valley for education. If online classes really work, then why not use them all the time? If they really don’t, then why bother using them at all? Answers to these questions vary based on who you ask. Accreditors, which enforce educational standards, may require courses to convene for a certain number of hours. Teachers want to stay on track—but also to take a sick day from time to time, without the pressure to keep working via laptop camera. Students want to be in class so that they can get what they came to college for—except when they want to live their lives instead. And now, amid political turmoil, university leaders want to control the flow of people on and off campus—while still pretending to carry on like normal.

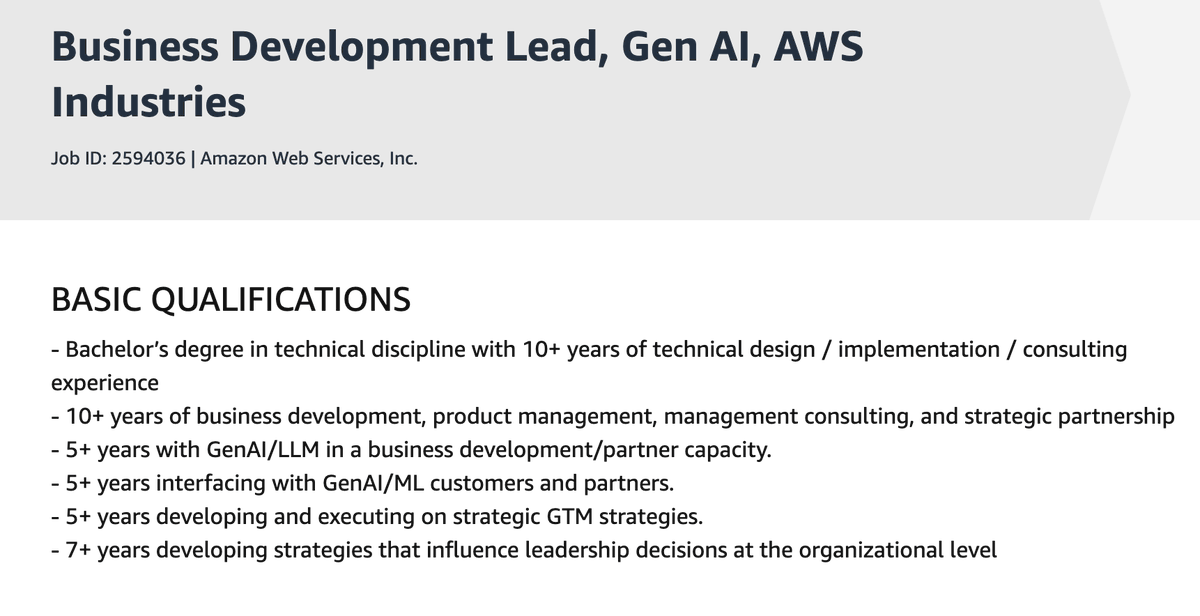

"5+ years experience with GenAI" says the company that's totally not a distant third place in hyperscale cloud AI providers.

"But doctor," sobbed the patient, "I *AM* Andrey Markov!"

Apple released something big three hours ago, and I'm still trying to get my head around exactly what it is.

The parent project is called CoreNet, described as "A library for training deep neural networks". Part of the release is a new LLM called OpenELM, which includes completely open source training code and a large number of published training checkpoints.

I'm linking here to the best documentation I've found of that training data: it looks like the bulk of it comes from RefinedWeb, RedPajama, The Pile and Dolma.